Understanding the concept of 1 as a decimal is fundamental in mathematics and in any field that relies on numerical precision. Decimals express fractions and whole numbers in a base-10 positional system, where each digit position corresponds to a power of 10. This system is used throughout everyday life, from calculating change to performing complex scientific calculations. In this post, we will look at the significance of 1 as a decimal, its applications, and how it relates to other numerical systems.

What is a Decimal?

A decimal is a number that includes a decimal point to separate the whole number part from the fractional part. For example, the number 3.14 is a decimal where 3 is the whole number part and 0.14 is the fractional part. Decimals are essential for representing values that are not whole numbers, such as measurements, percentages, and financial transactions.

Understanding 1 as a Decimal

When we talk about 1 as a decimal, we are referring to the number 1.0. This representation is straightforward: the whole number part is 1, and the fractional part is 0. The values 1 and 1.0 are numerically equal; the decimal point simply makes the fractional part explicit, even though it is zero. The notation matters in contexts such as programming, where many languages treat 1 as an integer and 1.0 as a floating-point number, and in measurement, where the trailing zero signals precision to the tenths place.
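To make the distinction concrete, here is a minimal Python sketch comparing the integer 1 with the floating-point value 1.0. The variable names are purely illustrative, and other languages may handle the two forms differently.

```python
# Compare the integer 1 with the floating-point value 1.0.
one_int = 1
one_float = 1.0

print(type(one_int).__name__)    # int
print(type(one_float).__name__)  # float

# The two values are numerically equal even though their types differ.
print(one_int == one_float)      # True

# Converting the integer to a float yields the decimal form.
print(float(one_int))            # 1.0

# The fractional part of 1.0 is zero, so it is a whole number.
print(one_float.is_integer())    # True
```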

Applications of 1 as a Decimal

The concept of 1 as a decimal is used in numerous fields. Here are some key applications:

- Programming and Software Development: In programming, numbers with a fractional part are usually stored as floating-point values. Knowing that 1.0 is typically treated as a floating-point number while 1 is an integer helps in writing correct code, especially when dealing with financial calculations or scientific computations.

- Finance and Accounting: Decimals are essential in financial transactions, where precision is crucial. For example, calculating interest rates, taxes, and currency conversions often involves decimals.

- Science and Engineering: In scientific research and engineering, decimals are used to represent measurements and constants with high precision. For instance, the standard acceleration due to gravity is defined as 9.80665 m/s².

- Everyday Life: Decimals are used in everyday activities such as shopping, cooking, and measuring distances. Understanding 1 as a decimal helps in making accurate calculations in these contexts.

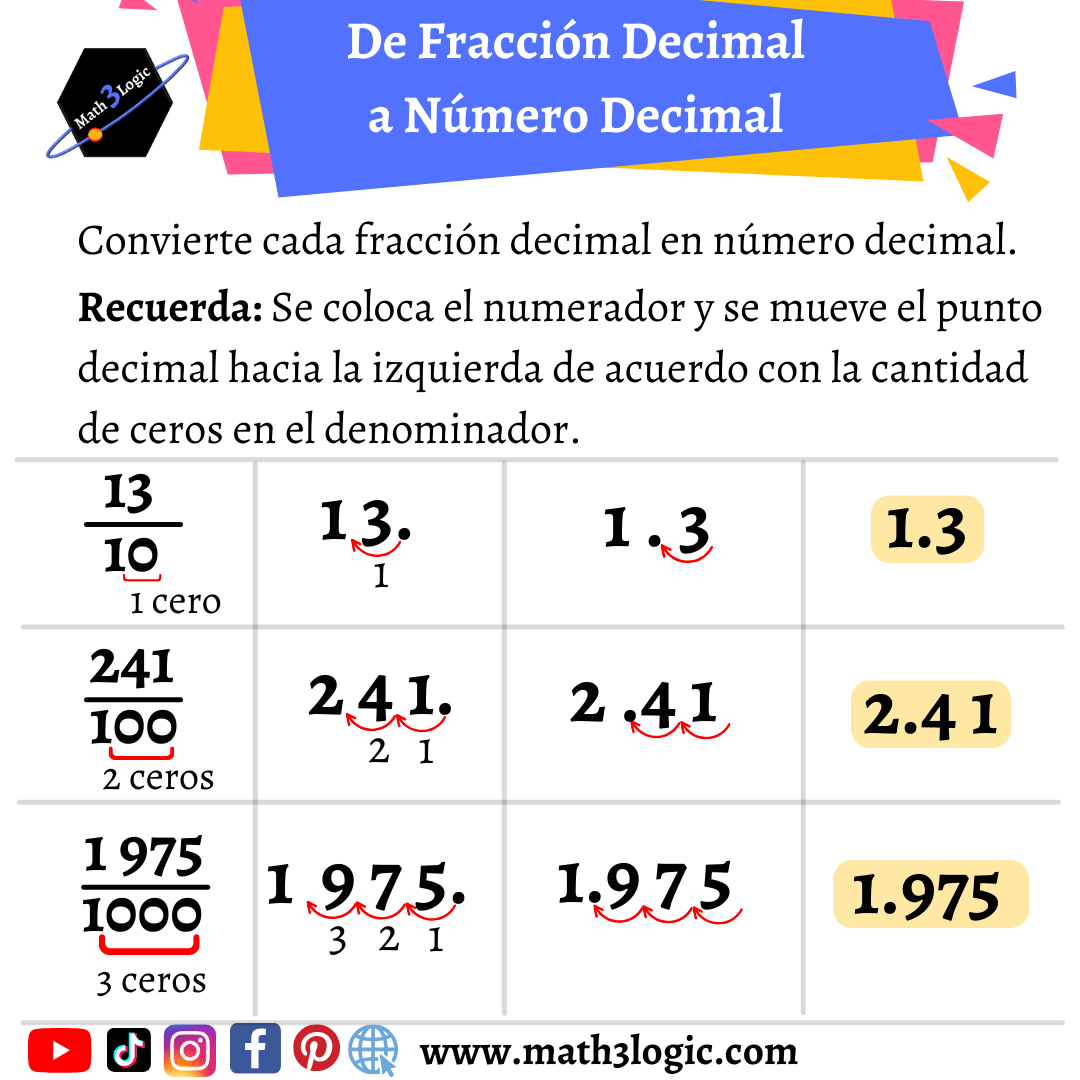

Converting Fractions to Decimals

Converting fractions to decimals is a common task in mathematics. To convert a fraction to a decimal, you divide the numerator by the denominator. For example, to convert the fraction 3⁄4 to a decimal, you divide 3 by 4, which gives you 0.75. This process is straightforward for simple fractions but can become complex for more intricate fractions.
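The division described above can be sketched in a few lines of Python. The helper name `fraction_to_decimal` is hypothetical and chosen only for illustration; the result is a binary floating-point value, so repeating decimals such as 1/3 are cut off after a finite number of digits.

```python
def fraction_to_decimal(numerator: int, denominator: int) -> float:
    """Divide the numerator by the denominator (illustrative helper)."""
    if denominator == 0:
        raise ZeroDivisionError("denominator must not be zero")
    return numerator / denominator

print(fraction_to_decimal(3, 4))  # 0.75
print(fraction_to_decimal(1, 1))  # 1.0
print(fraction_to_decimal(1, 3))  # 0.3333333333333333
```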

Converting Decimals to Fractions

Converting decimals to fractions involves understanding the place value of each digit in the decimal. For example, the decimal 0.25 can be converted to the fraction 25⁄100, which simplifies to 1⁄4. This process is essential in various mathematical operations and ensures that decimals can be represented in fractional form when needed.
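As a rough sketch of the place-value approach, the Python function below reads a decimal written as text, uses the number of digits after the point to pick a power-of-10 denominator, and then simplifies. The name `decimal_to_fraction` is an assumption made for this example, and the input is assumed to be a plain decimal string such as "0.25".

```python
from math import gcd

def decimal_to_fraction(text: str) -> tuple[int, int]:
    """Convert a decimal string to a simplified (numerator, denominator) pair."""
    whole, _, frac = text.partition(".")
    # Two digits after the point means hundredths, three means thousandths, etc.
    denominator = 10 ** len(frac)
    numerator = int(whole + frac)
    # Simplify by dividing both parts by their greatest common divisor.
    divisor = gcd(numerator, denominator)
    return numerator // divisor, denominator // divisor

print(decimal_to_fraction("0.25"))  # (1, 4)
print(decimal_to_fraction("0.75"))  # (3, 4)
print(decimal_to_fraction("1.0"))   # (1, 1)
```

Python's standard library also offers `fractions.Fraction`, which accepts decimal strings directly and performs the same simplification.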

Importance of Precision in Decimals

Precision is crucial when working with decimals. Even a small error in a decimal value can lead to significant discrepancies in calculations. For example, in financial transactions, a slight error in a decimal value can result in substantial losses or gains. Therefore, understanding 1 as a decimal and ensuring precision in decimal calculations is essential in various fields.
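The effect of a small error is easy to demonstrate. In this illustrative Python sketch, adding 0.1 ten times with binary floating-point numbers does not give exactly 1.0, while the standard `decimal` module does. The variable names are assumptions made for the example.

```python
from decimal import Decimal

# Add 0.1 ten times using binary floating-point numbers.
float_total = 0.0
for _ in range(10):
    float_total += 0.1

# Add 0.1 ten times using exact decimal arithmetic.
decimal_total = Decimal("0")
for _ in range(10):
    decimal_total += Decimal("0.1")

print(float_total)           # 0.9999999999999999
print(float_total == 1.0)    # False
print(decimal_total)         # 1.0
print(decimal_total == 1)    # True
```

For money and other values that must match their written decimal form exactly, a decimal type is often the safer choice.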

Decimal Places and Rounding

Decimal places refer to the number of digits after the decimal point. For example, the number 3.14 has two decimal places. Rounding is the process of approximating a decimal to a certain number of decimal places. For instance, rounding 3.14159 to two decimal places gives 3.14. Understanding decimal places and rounding is crucial in various applications, such as scientific research and financial calculations.
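A short Python sketch of counting decimal places and rounding, using the standard `decimal` module. The rounding mode shown (round half up) is one common convention, picked here as an assumption; other fields use other rules.

```python
from decimal import Decimal, ROUND_HALF_UP

# Count decimal places: the exponent of 3.14 is -2, so it has two places.
print(-Decimal("3.14").as_tuple().exponent)  # 2

# Round 3.14159 to two decimal places.
value = Decimal("3.14159")
rounded = value.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
print(rounded)  # 3.14

# Writing 1 with two decimal places keeps the value but shows the precision.
one_two_places = Decimal("1").quantize(Decimal("0.01"))
print(one_two_places)  # 1.00

# Rounding rules differ: Python's built-in round() sends ties to the
# nearest even number, while ROUND_HALF_UP sends ties away from zero.
print(round(2.5))  # 2
print(Decimal("2.5").quantize(Decimal("1"), rounding=ROUND_HALF_UP))  # 3
```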

Common Mistakes in Decimal Calculations

There are several common mistakes that people make when working with decimals. Some of these include:

- Misplacing the Decimal Point: This is a common error that can lead to significant discrepancies in calculations. For example, writing 1.0 as 10.0 or 0.1 can change the entire outcome of a calculation.

- Incorrect Rounding: Rounding errors can occur when approximating decimals to a certain number of decimal places. It is essential to follow the correct rounding rules to avoid these errors.

- Ignoring Significant Figures: Significant figures are the digits in a number that carry meaningful information. Ignoring significant figures can lead to inaccurate calculations. For example, in the number 0.0034, the significant figures are 3 and 4.
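To illustrate the significant figures point, here is a minimal Python sketch that counts the digits `Decimal` stores for a number written as text. The helper `significant_figures` is hypothetical, and it follows the convention that leading zeros are not significant while trailing zeros after the decimal point are. Whole numbers with trailing zeros, such as 100, are ambiguous under the usual rules, so the sketch is not meant for them.

```python
from decimal import Decimal

def significant_figures(text: str) -> int:
    """Count the digits Decimal stores, which excludes leading zeros."""
    return len(Decimal(text).as_tuple().digits)

print(significant_figures("0.0034"))  # 2 (the digits 3 and 4)
print(significant_figures("1"))       # 1
print(significant_figures("1.0"))     # 2 (the trailing zero counts)

# A misplaced decimal point changes the value by a factor of ten.
print(Decimal("1.0") == Decimal("10.0"))  # False
```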

📝 Note: Always double-check your decimal calculations to ensure accuracy and precision.

Decimal Representation in Different Numerical Systems

Numbers with a fractional part are not limited to the base-10 system. The same positional notation, with a point separating the whole part from the fractional part, works in other numerical systems such as binary, octal, and hexadecimal. Understanding how fractional values are represented in these systems is essential in fields like computer science and digital electronics.

Binary Decimals

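In the binary system, numbers are written using only two digits, 0 and 1, and each position corresponds to a power of 2. The decimal number 1.0 is also written as 1.0 in binary, while decimal 0.5 is written as 0.1 (one half). Not every decimal fraction has a finite binary form: 0.1, for instance, repeats forever in binary. This system is fundamental in computer science, where all data is represented in binary form.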

Octal and Hexadecimal Decimals

In the octal system, numbers are written using eight digits, 0 to 7, and each position corresponds to a power of 8. In the hexadecimal system, sixteen digits are used, 0 to 9 and A to F, and each position corresponds to a power of 16. The decimal number 1.0 is written as 1.0 in both systems, because 1 is a single digit in every base; the differences show up for other values. For example, decimal 0.5 is 0.4 in octal and 0.8 in hexadecimal. These systems are used in various applications, such as programming and digital electronics.
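The following Python sketch converts a non-negative number to its positional form in another base by repeatedly dividing the whole part and multiplying the fractional part. The function `to_base`, its `places` limit, and the `DIGITS` table are assumptions made for illustration. Because the input is a Python float, the digits shown for a value like 0.1 are those of its nearest binary approximation, truncated to the requested length.

```python
DIGITS = "0123456789ABCDEF"

def to_base(value: float, base: int, places: int = 8) -> str:
    """Write a non-negative number in the given base (2 to 16)."""
    whole = int(value)
    frac = value - whole

    # Whole part: collect remainders of repeated division by the base.
    whole_digits = "0" if whole == 0 else ""
    while whole > 0:
        whole_digits = DIGITS[whole % base] + whole_digits
        whole //= base

    # Fractional part: repeated multiplication by the base, up to `places` digits.
    frac_digits = ""
    for _ in range(places):
        if frac == 0:
            break
        frac *= base
        digit = int(frac)
        frac_digits += DIGITS[digit]
        frac -= digit

    return f"{whole_digits}.{frac_digits or '0'}"

print(to_base(1.0, 2), to_base(1.0, 8), to_base(1.0, 16))  # 1.0 1.0 1.0
print(to_base(0.5, 2), to_base(0.5, 8), to_base(0.5, 16))  # 0.1 0.4 0.8
print(to_base(2.75, 2))  # 10.11
print(to_base(0.1, 2))   # 0.00011001 (truncated; the pattern repeats)
```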

Decimal Representation in Programming

In programming, decimals are often represented using floating-point numbers. Floating-point numbers are a way of representing real numbers in a computer’s memory. Understanding how decimals are represented in programming is essential for writing precise and efficient code.

Floating-Point Precision

Floating-point precision refers to the number of significant digits that a floating-point number can reliably hold. In the common IEEE 754 formats, a 32-bit floating-point number holds about 7 significant decimal digits, while a 64-bit floating-point number holds about 15 to 17. Understanding floating-point precision is crucial in fields like scientific research and financial calculations, where high precision is required.
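These limits can be inspected from Python, whose `float` type is a 64-bit floating-point number on typical platforms. The sketch below also round-trips values through a 32-bit encoding with the standard `struct` module; the helper name `to_float32` is an assumption made for this example.

```python
import struct
import sys

# Decimal digits a 64-bit float can always hold faithfully.
print(sys.float_info.dig)       # 15
# Number of binary digits in the significand.
print(sys.float_info.mant_dig)  # 53

def to_float32(value: float) -> float:
    """Round a value to 32-bit precision by packing and unpacking it."""
    return struct.unpack("<f", struct.pack("<f", value))[0]

# 1.0 is exactly representable at both precisions.
print(to_float32(1.0) == 1.0)   # True

# 0.1 is not, and the 32-bit approximation is visibly coarser.
print(to_float32(0.1) == 0.1)   # False
print(to_float32(0.1))          # 0.10000000149011612
```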

Common Floating-Point Errors

There are several common errors that can occur when working with floating-point numbers. Some of these include:

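- Rounding Errors: Most decimal fractions, such as 0.1, have no exact binary representation, so they are stored as the nearest representable value. These small errors can accumulate across operations. Rounding only at the end of a calculation, and comparing values with a tolerance rather than exact equality, helps limit their effect.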

- Overflow and Underflow: Overflow occurs when the magnitude of a result exceeds the largest value the format can represent; the result typically becomes infinity. Underflow occurs when a nonzero result is too close to zero to be represented at normal precision, so it is rounded to a much less precise value or to zero. Both can lead to significant discrepancies in calculations.

- Precision Loss: Precision loss can occur when performing arithmetic operations on floating-point numbers. For example, adding a very small number to a very large one can leave the large number unchanged, and subtracting two nearly equal numbers can cancel most of the significant digits.
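Each of the errors listed above can be reproduced in a few lines of Python. This is a minimal sketch that assumes the usual 64-bit floats; the variable names are illustrative.

```python
import math
import sys

# Rounding error: 0.1 and 0.2 are stored as approximations.
print(0.1 + 0.2 == 0.3)              # False
print(math.isclose(0.1 + 0.2, 0.3))  # True (comparison with a tolerance)

# Overflow: doubling the largest finite float gives infinity.
overflowed = sys.float_info.max * 2
print(overflowed)                    # inf

# Underflow: halving the smallest positive float gives zero.
underflowed = 5e-324 / 2
print(underflowed)                   # 0.0

# Precision loss: adding 1.0 to a very large number changes nothing.
big = 1e16
print(big + 1.0 == big)              # True
```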

📝 Note: Always be aware of the limitations of floating-point numbers and take steps to minimize errors in your calculations.

Conclusion

Understanding 1 as a decimal, written 1.0, is a small but useful starting point for working with decimals in general. We have seen how decimals relate to fractions, how decimal places and rounding affect precision, how the same positional idea carries over to binary, octal, and hexadecimal, and how floating-point numbers approximate decimals in programming. Whether in programming, finance, science, or everyday life, a solid understanding of decimals and their limitations helps keep calculations accurate.
